This year marks the 60th anniversary of the first Dartmouth Conference, the research project that is credited with introducing the term ‘Artificial Intelligence’ (AI) to the world.

Clearly, AI isn’t a new concept, and Facebook’s announcement that it will enable businesses to deliver automated customer support, online shopping guidance, content and interactive experiences to its users through chatbots is the latest iteration of the technology.

As with most news from Facebook, the announcement has caused quite a stir – only a few days later people were already hailing Bots as ‘The New Apps’!

It’s worth taking a moment to look behind all the hype around chatbots and understand the technology that sits at their core. Chatbots, like Apple’s Siri, use deep learning and neural networks to learn from data sets and apply that knowledge to future problems and tasks – in a way loosely modelled on (although obviously far less sophisticated than) the way a human brain does.

Although they are still in their development phase, chatbots are becoming more readily accepted by the general public, who probably don’t even really think of them as a form of AI. That might actually be one of the reasons that people are generally more at ease with them.

It could also be why Facebook judges that the time is right for stepping the technology up a gear. After all, if you’re comfortable ‘talking’ to Google or Siri, then it isn’t such a big step to imagine engaging with a chatbot in other guises such as online shopping assistants, customer service advisors and help desk support.

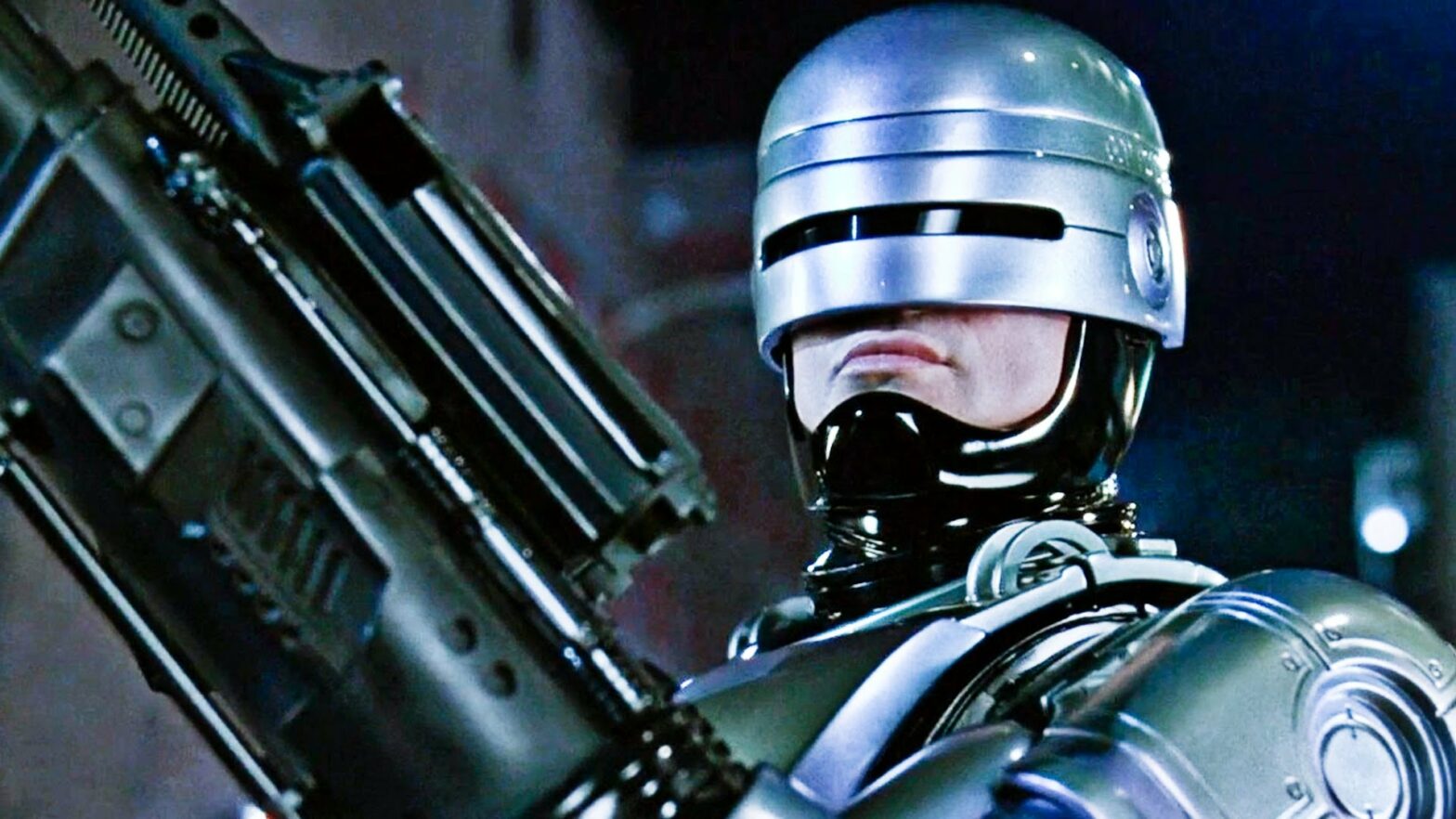

How long will it be, then, before someone coins the term ‘BotRage’ when they come face to face with an unyielding customer service bot? What happens when the computer can actually ‘say no’?

But being serious for a moment, the technology behind chatbots, i.e. machine-learning pattern recognition, can also deliver some serious business benefits. For example, it’s already an established factor in fraud detection and payment protection, and work is under way to develop more sophisticated algorithms that identify threats that could be missed by humans and more traditional IT security mechanisms.

Used in this way, AI has the potential to discover abnormal behaviours that could indicate compromised software, the presence of malware or other forms of cyber attack, and to alert IT teams to them.
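To make the idea of ‘abnormal behaviour’ concrete, here is a minimal sketch in Python. It is an illustration only, not a description of any particular security product: it swaps the machine-learning models discussed above for a far simpler statistical baseline (a z-score test), and the metric (outbound connections per hour from one server), the sample figures, the function names and the threshold of three standard deviations are all assumptions made for the example.

```python
from statistics import mean, stdev


def fit_baseline(history):
    """Learn a simple baseline (mean and standard deviation) from past observations."""
    return mean(history), stdev(history)


def find_anomalies(observations, baseline, threshold=3.0):
    """Return (index, value) pairs lying more than `threshold` standard deviations from the mean."""
    mu, sigma = baseline
    if sigma == 0:
        # A perfectly flat baseline: any deviation at all counts as abnormal.
        return [(i, v) for i, v in enumerate(observations) if v != mu]
    return [
        (i, v)
        for i, v in enumerate(observations)
        if abs(v - mu) / sigma > threshold
    ]


# Hypothetical data: outbound connections per hour from a single server.
history = [42, 38, 45, 40, 41, 39, 44, 43, 37, 41]
today = [40, 43, 39, 310, 42]

baseline = fit_baseline(history)
anomalies = find_anomalies(today, baseline)
print(anomalies)  # [(3, 310)]
```

A real system would learn from many signals at once and keep updating its baseline as normal behaviour changes, but the principle is the same: model what ‘normal’ looks like, then flag whatever falls well outside it for an IT team to investigate.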

Security experts believe that, in the future, mitigating such attacks will be dependent on this kind of AI, with the ability to detect an offence early and run the necessary countermeasures. These self-learning solutions will utilise current knowledge to anticipate a vast range of attack scenarios and constantly evolve their detection and response capabilities.

Looking further into the future, the AI that underpins chatbots could be able to offer more support to IT teams, and take on some of the hugely onerous tasks like penetration testing. AI could deliver a new wave of truly intelligent networks that constantly troubleshoot for potential network problems and keep the infrastructure running smoothly.

This means that IT teams should have more time to engage in monitoring and managing security scripts in much the same way as they oversee today’s automated solutions. In theory, life for the IT manager should become much more about ensuring the enterprise infrastructure is designed to be secure by default – rather than engaging in the reactive ‘patch and pray’ behaviours of today.

But there will be an impact on the policies and procedures that IT managers employ. For example, decisions will need to be taken about how much autonomy will be handed over to AI systems and questions like ‘who will police the self-managing network?’ will need to be considered.

And, because data protection regulations don’t stay static, organisations will need to ensure these self-learning systems keep pace with changing regulatory requirements. How would an AI system deal with the invalidation of Safe Harbour and the changes required in data protection and movement, for example? Would it be able to apply the appropriate storage, backup and archiving processes to personal data?

As network managers grow more confident with AI techniques, they will become more willing to use them in more complex applications. So, as developers prepare to grapple with building bots and working with Facebook’s bot-building partners, IT professionals in particular should be asking themselves: ‘what difference could AI make to me?’ Facebook has just initiated the future of AI for business. The question is, are we all ready for it?

Sourced from Michael Hack, Senior Vice President, EMEA Operations, Ipswitch