AI companies including OpenAI, Google DeepMind and Meta have agreed to the UK Government testing their latest models to assess national security risks.

Other signatories to the legally non-binding deal, announced at the end of the two-day Bletchley Park AI summit, included Anthropic and Amazon.

The document was also signed by governments including Australia, Canada, the EU, France, Germany, Italy, Japan and South Korea, but China was not a signatory.

The testing in the UK will be overseen by the AI Safety Institute, which the Government has said will “draw on the specialist expertise of the defence and national security community”.

Prime Minister Rishi Sunak said: “Until now, the only people testing the safety of new AI models have been the very companies developing it. We shouldn’t rely on them to mark their own homework, as many of them agree.

“Today we’ve reached a historic agreement, with governments and AI companies working together to test the safety of their models before and after they are released.”

Annual AI risk report

An international panel of experts will also publish an annual report on the evolving risks of AI, including bias, misinformation and more extreme “existential” risks, such as aiding in the development of chemical weapons.

The AI risk report, agreed on by 20 countries, will be modelled on the Intergovernmental Panel on Climate Change, with the first report to be chaired by Yoshua Bengio, professor of computer science at the University of Montreal.

“I believe the achievements of this summit will tip the balance in favour of humanity,” said Sunak. “We will work together on testing the safety of new AI models before they are released.”

Sunak, when asked whether the UK needed to go further by setting out binding regulations, said that drafting and enacting legislation “takes time”.

The US government issued an executive order on Monday, the administration’s broadest step yet in tackling AI threats. The US also said this week that it planned to set up its own institute to police AI. The UK agreed partnerships with the US AI Safety Institute, and with Singapore, to collaborate on AI safety testing.

Thursday’s announcement was the second of two major agreements brokered at the summit. On Wednesday, more than two dozen countries – all of those attending the event – signed the Bletchley Declaration, which warned of the potential for AI to bring “catastrophic harm”.

Musk predicts ‘age of abundance’

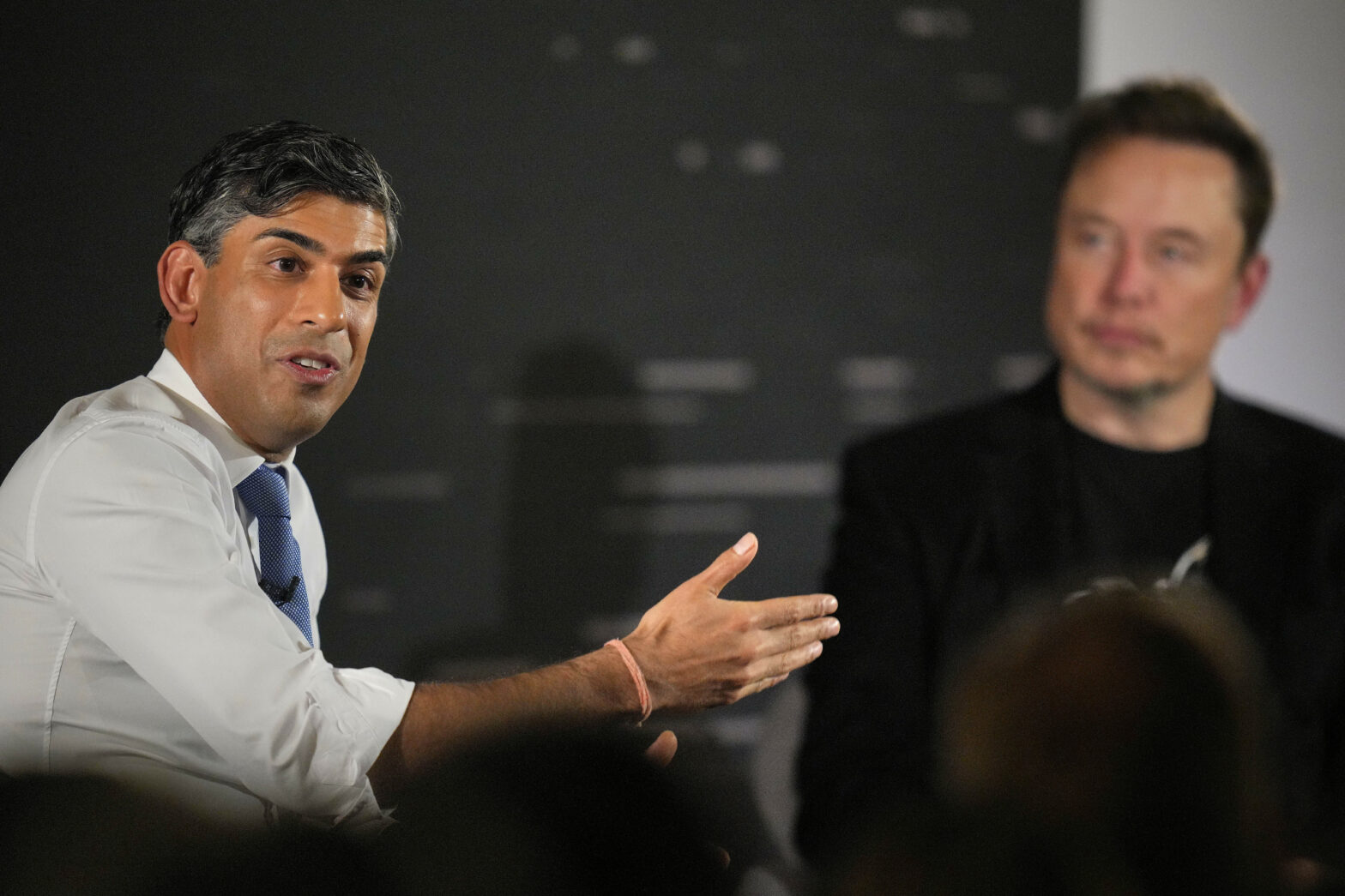

The two-day Bletchley Park AI Summit ended with Rishi Sunak interviewing Elon Musk, owner of X, Tesla and SpaceX, about his view of AI.

Asked what he thought of intergovernmental oversight, Musk said jokingly that “it will be annoying, that’s true” but said he supported the plan because “we’ve learnt over the years that having a referee is a good thing”.

The world’s richest man predicted that AI would create “an age of abundance” where nobody would have to work unless they wanted to.

“We won’t be on universal basic income,” he added. “We will be on universal high income.”
