The utility computing market has yet to progress beyond the early-adopter stage. But organisations taking the risk are enjoying significant benefits.

The notion of utility or grid computing is nothing new. As far back as 1968, computer scientist JCR Licklider wrote: “Interconnection will make available to all communities the programs and resources of the entire supercommunity.” Licklider’s essay helped inspire the Internet and, eventually, distributed computing.

More than three decades later, the World Wide Web is a critical component of business, but a ‘global grid’ remains a fanciful dream. Organisations employing some form of distributed computing architecture number in the tens of thousands, rather than the hundreds of thousands or even millions. Of those, perhaps as many as 90% have established internal ‘grids’ made up of clusters of computers linked together by high-speed networks. Many are university research clusters. Only a tiny minority involve enterprises tapping into resources shared with other companies.

The slow progress in the commercial sector can be attributed to a number of factors, which are explored in greater detail elsewhere in the Utility Computing report. They include significant cultural barriers, inadequate cross-platform security and relatively high set-up costs, as well as some hidden costs.

But ask any early adopter, and most will play down the risks while talking up the benefits. Many are baffled by the foot-dragging of their peers. Below are some of their experiences.

White Rose Computational Grid

Part of the UK government’s ‘E-science’ grid computing initiative, the White Rose Computational Grid got underway in summer 2002 and was given its official launch in January 2003. It shares resources across Leeds, York and Sheffield universities and is already being tapped by a small number of UK enterprises including Rolls Royce and Shell.

The hardware and software were supplied by Sun Microsystems and the project was managed by Esteem Systems, a local systems integrator. According to David Ogden, director of the Sun Microsystems business division of Esteem Systems, the regional grid comprises 184 Sun Sparc processors and 256 Intel processors within 19 servers and 128 PCs, linked together by a high-speed network that serves all of the region’s colleges and universities.

Not all of the resources are shared: only 25% is available to all three universities, with the remainder reserved for internal use. Utilisation rates have grown steadily, from about 25% to the present 75%, and should reach 100% by May 2003. After that, the project has three options: buy new machines, grid-enable machines from the universities’ core IT infrastructures, or maintain the original grid.

Martin Doxey, chief executive of the White Rose University Consortium, which administers the project, says the grid has already been put to some sophisticated uses: research into bone disorders, fluid dynamics, pattern recognition, systems visualisation, image rendering and informatics, to name a few. Commercial enterprises cannot link directly to the grid because it was built with public money. But companies are effectively sub-contracting some of their most computing-intensive research projects to the participating universities. In the case of Rolls Royce, it gave a grant of £3 million to Leeds University to collate maintenance information about all its jet engines.

In the future, the consortium wants to launch at least one spin-out company, explore a range of billing models and expand the grid to other universities. It is also keen to generate more interest within the commercial sector. At the recent official launch, there were more than 100 attendees. Tellingly, most of them were business people, rather than academics.

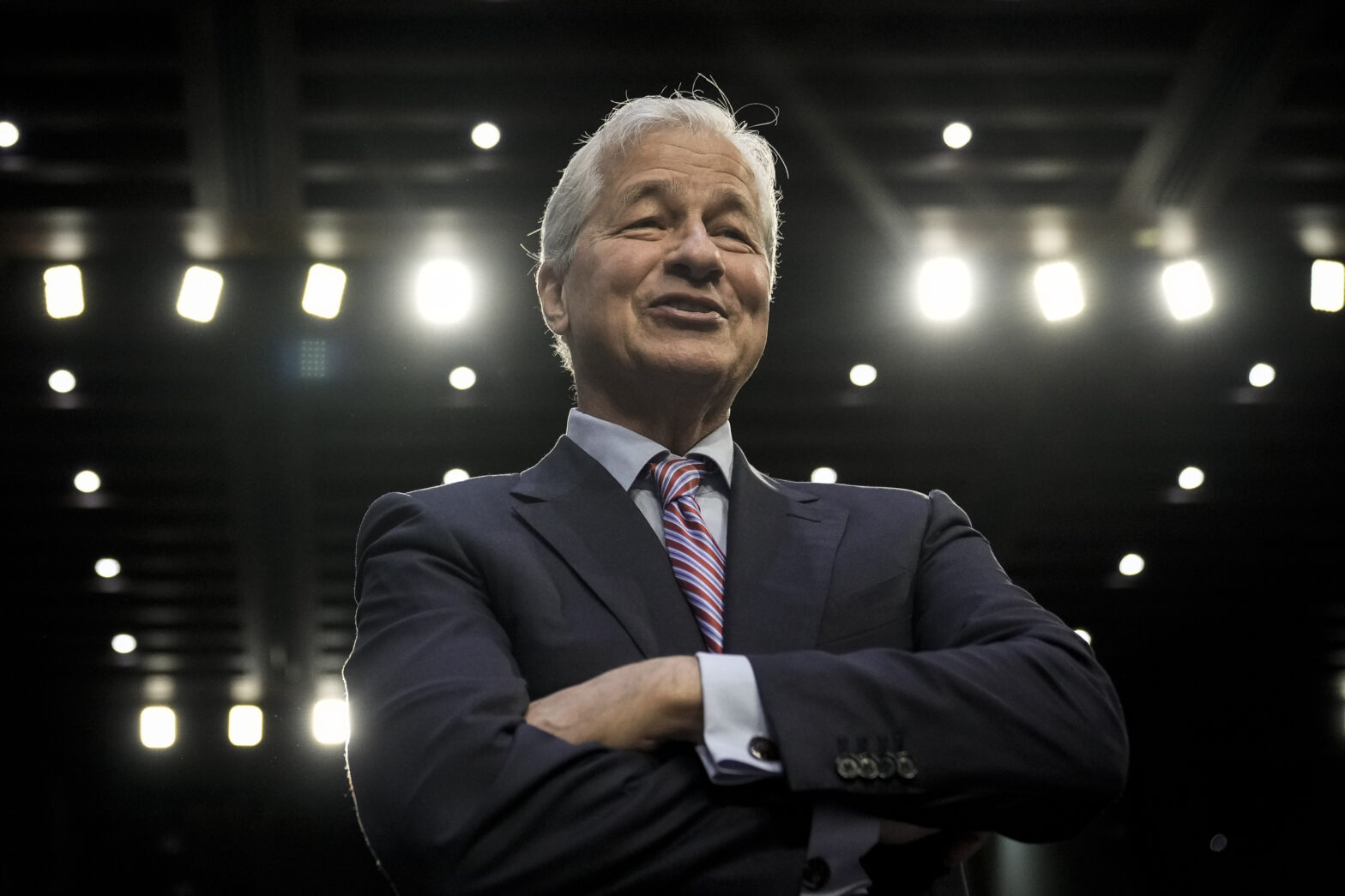

JP Morgan Chase

Executives at JP Morgan Chase, the US investment bank, spent the second half of 2002 negotiating a blockbuster $5 billion contract with IBM’s Global Services division. Initially, according to reports, this was to have been only a traditional IT outsourcing deal, but the executives were gradually won over by the notion of ‘computing on demand’, whereby JP Morgan Chase would be billed only for the computing resources it actually used. By all accounts, the bankers were unfamiliar with the notion of ‘utility computing’, and had to sit through a number of demonstrations before they were convinced.

The seven-year deal covers a substantial portion of JP Morgan Chase’s data processing facilities, including data centre management, help desks and maintenance of data and voice networks. Under the outsourcing portion of the deal, JP Morgan Chase is in the process of transferring about 4,000 employees to IBM. Among other things, they will work on creating a ‘virtual pool’ of computing resources to be deployed as needed. IBM will also deploy its ‘utility management infrastructure’ technology across the bank’s retail banking, mortgages, trading and securities processing divisions.

Some analysts have questioned the true extent of the ‘utility computing’ element of the deal, and point out that IBM has been offering usage-based pricing models to its outsourcing clients for a number of years. But executives at JP Morgan Chase are unconcerned. “IBM’s global strength and computing capabilities, delivered ‘on demand’, will help us create significant value for our clients, shareholders and employees,” says Thomas Ketchum, vice chairman of JP Morgan Chase.

Pratt & Whitney

Grid computing is a way of life at Pratt & Whitney, the aerospace-engineering division of manufacturing conglomerate United Technologies.

Its aircraft engine components power more than half of the world’s commercial fleet and propel most US Air Force fighters at the speed of sound. But the highly competitive aviation industry expects far more than power from an aircraft engine; it demands thrust at the lowest possible cost and product renewal at a rapid rate.

Back in the early 1990s, Pratt & Whitney installed Canadian supplier Platform Computing’s distributed computing software to create a pool of thousands of processors. Today, the software is running on about 5,000 Sun and Dell PCs across five locations in the US and Canada, linked by a 100Mbit/s Ethernet network.

The grid runs mostly at night, when workstations and desktops are not in use, simulating the design and development of aircraft engines and space propulsion systems. When the grid was first established, it cut engineering time in half and reduced development costs by almost 60%. That gave the company the capacity to execute thousands of additional analysis jobs.

But the grid is now so much a part of the Pratt & Whitney business model that it is almost taken for granted. “We don’t even talk about these kinds of gains anymore,” says Pete Bradley, a company scientist. And to businesses still sceptical about the benefits of grid computing, he has a simple message: “It is time to forget the scepticism and get on board – because everyone else is.”