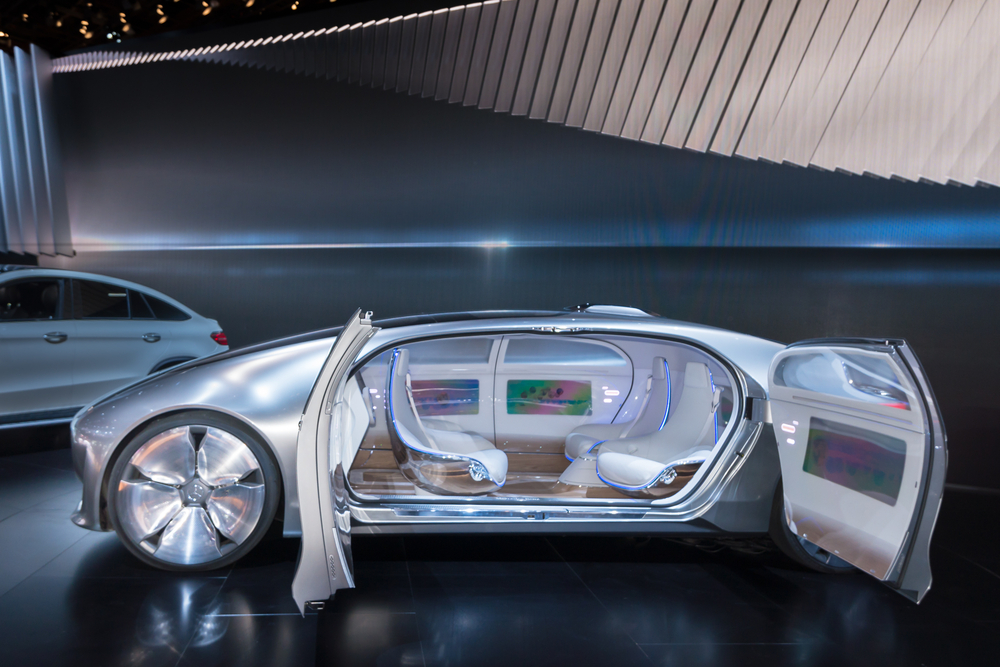

For automakers, the smart transformation poses significant challenges. It will require a new paradigm of design, supply and engineering. Data gathering and analysis, graphics processing efficiency and the pace of consumer tech innovation are among the areas newly on their agenda. Safety must remain core to the carmaker’s approach, but the shift will also require a robust technology strategy, one that will ultimately lead to self-driving cars.

From smartphones to smart cars

A smartphone can be found in just about every pocket or bag today. Mobile device makers are in an arms race to pack these extremely powerful computers with increasingly advanced location sensors, cameras, touchscreens, wireless connectivity and processing technologies. Rapid advancements in features, processing speeds, and battery life have come to be expected by consumers on at least a yearly basis. And due to regular software updates, the devices improve even after they’re brought home.

This mobile revolution has raised the expectations of car buyers. After all, for most people, their car is the single most valuable consumer device they’ll ever own.

Mobile platform developers like Google are already integrating their user interface experiences into the car so consumers can be connected and online everywhere they go. In 2013, Audi, GM, Google, Honda, Hyundai and NVIDIA formed the Open Automotive Alliance to bring the Android platform to cars. The alliance is focused on using the operating system to create intuitive and simple-to-use interfaces and applications for the car. Apple has also entered the fray with its own CarPlay infotainment system.

Some automakers have also had success in creating intuitive software systems. At this year’s Consumer Electronics Show in Las Vegas, Audi received the Popular Science “Product of the Future” award for its virtual cockpit and multimedia interface, which was praised for delivering a driver-centric experience with 3D graphics and brilliant clarity in the 2016 TT and Q7. And in October 2014, Honda became the world’s first carmaker to announce an embedded Android infotainment system powered by NVIDIA.

However, despite these innovations, automaker product rollout cycles still average two to three years. This is a lifetime compared to what consumers experience with their phones and tablets. The solution for automakers is to follow the mobile device makers’ lead: integrate a highly capable hardware platform with a flexible operating system that can allow for frequent software updates.

Tesla Motors pioneered this practice, allowing new features to be added to Model S cars while they sit in their owners’ garages overnight. It can also save money: Tesla can adjust some vehicle parameters through a software update where the change might otherwise have required a costly recall. Personalisation of the virtual cockpit, as demonstrated in the new Audi TT, is also a key trend.

Combined with a modular approach, which empowers car manufacturers to use current-generation mobile processors in new cars, sophisticated software platforms and over-the-air (OTA) updates will dramatically close the capability gap between consumer electronics and in-vehicle systems.

Beyond pretty pictures

All in all, there are around 7.5 million cars on the road today utilising the same architecture you’d find powering supercomputers, computer games and blockbuster visual effects. Navigation and infotainment systems are just the beginning of what can be achieved with this level of computing power. Manufacturers are already sowing the seeds of full-scale autonomous driving, but three critical elements still need to be addressed.

The first is the need for massively high-performance processors in a low-power envelope; the second is a modular system designed hand in hand with ease of programmability; and the third is a safety-critical virtual cockpit or cluster that acts as a point of aggregation for the driver.

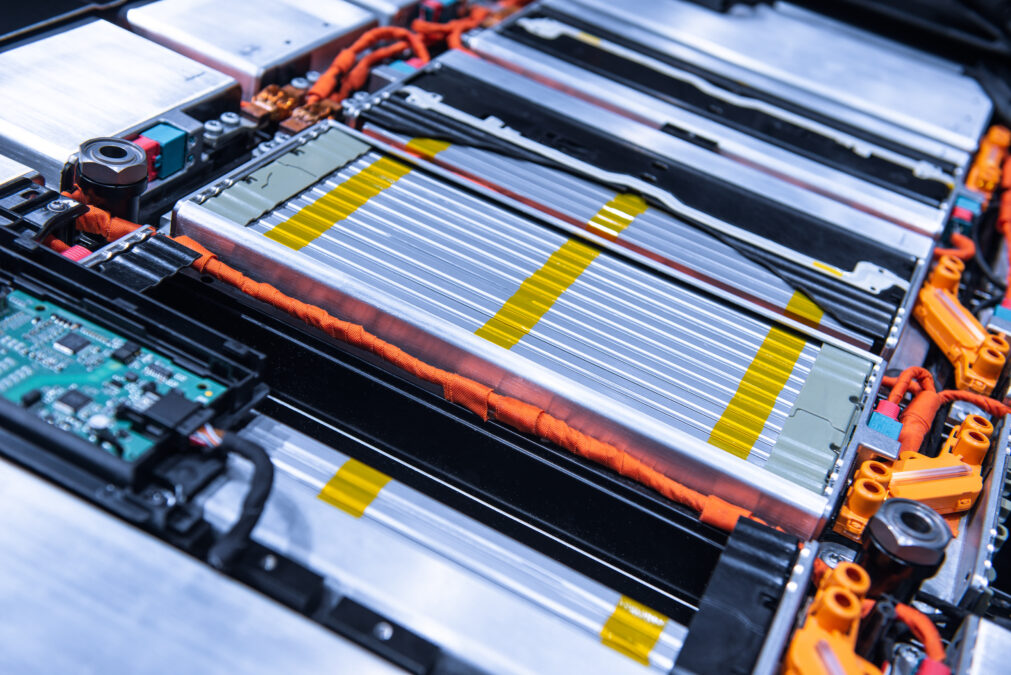

Cars already possess many sensors, from cameras and radar to laser scanners and ultrasonic sensors. The next generation of vehicles will need to go further, not just collecting but understanding this sensory information using high-performance, energy-efficient processors that can run the search, natural language processing and object recognition algorithms needed for ADAS (advanced driver assistance systems). For example, last year GM announced the adoption of an eye-tracking system in vehicles over the next five years, designed to enhance safety by recognising when drivers’ attention wanders from the road.

Processing these incredible amounts of information requires the power of a supercomputer, one that can handle thousands of computation points every second. But, like those powering smartphones, car-based processors must also be small and operate in an extremely energy-efficient manner. Cramming a desktop-sized computer into a car dashboard is not an option.

On the road to autonomous driving

As the automotive industry begins to tackle these challenges and embrace the lightning cadence of consumer technology, it appears that the promise of self-driving cars is well on the way to fulfilment. Mobile technology is delivering high-power, energy-efficient performance. Computer vision applications are already on the road, with more on the way.

It’s no secret that Google and others have been road-testing autonomous cars for several years. During Audi’s keynote address at CES 2015, one of its vehicles drove itself onto the stage. And new business models are blossoming. This month, app-based transportation company Uber announced it is building a robotics research facility to kick-start autonomous taxi fleet development, and there is every possibility that these driverless cars will prove to be the future of the firm.

Naturally, the advent of truly autonomous cars must be preceded by the extensive debate, qualification and regulation that surround all life-critical technology. However, with supercomputing capability housed in increasingly small packages, the vision for future cars is bright.

Sourced from Jaap Zuiderveld, NVIDIA